T.I. urges people to use government funds to purchase land

T.I. is encouraging people to buy land with the funds they receive from the government. On Wednesday, the emcee decided to discuss financial advice

Daniel Lewis Lee died by lethal injection on Tuesday (July 14) in the nation’s first federal execution since 2003. The 47-year-old white supremacist was executed on Tuesday morning at a federal prison in Terre Haute, Indiana, CBS reports.

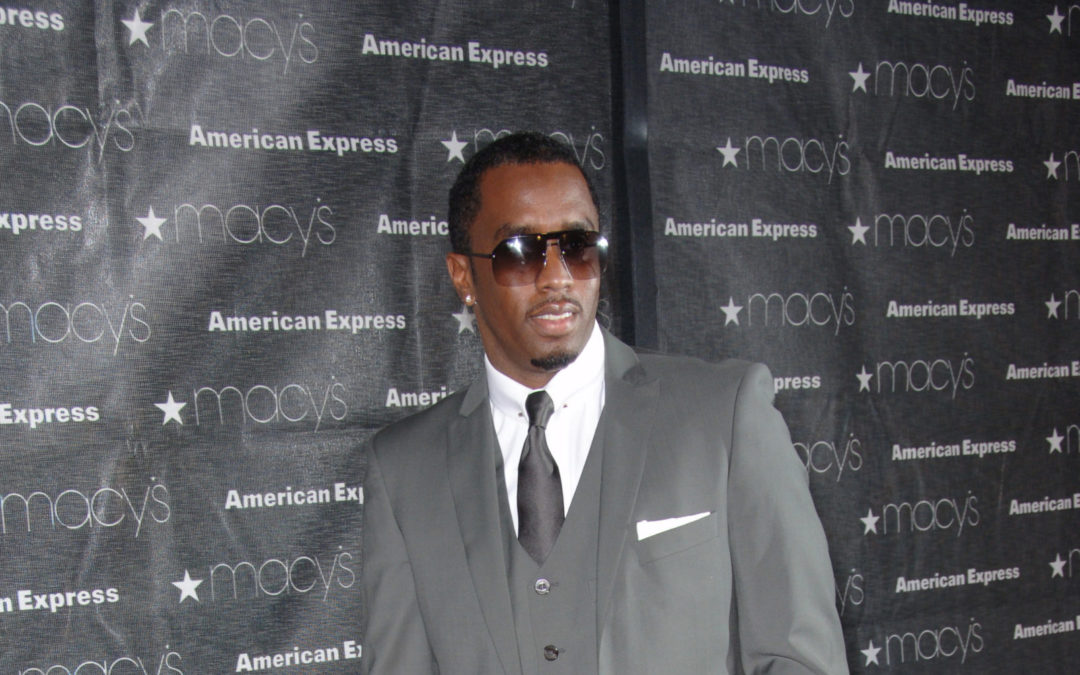

Image Editorial credit: Featureflash Photo Agency / Shutterstock.com

Renowned music mogul Diddy found himself on the receiving end of unexpected home raids on a recent Monday. The houses in question were his luxurious abodes in Los Angeles and Miami. This event has sent shockwaves through the music industry and fans alike.

The raids were carried out by federal agents, who confiscated several electronic devices from both properties. The operation was conducted by Homeland Security agents, who descended on the mansions under search warrants issued by Manhattan’s federal court.

Diddy, whose real name is Sean Combs, had his residences turned inside out as agents went about their investigation. His sons, Justin and King, were temporarily detained outside the Los Angeles home, in line with standard protocol in situations such as these.

The impetus behind these sudden raids was a sex trafficking investigation opened against Diddy. This investigation commenced after four women came forward with civil lawsuits, leveling serious allegations of rape, abuse, and sex trafficking against the music star.

Federal prosecutors directed Homeland Security Investigations agents to gather evidence to substantiate the women’s accusations. This led to the raids on Diddy’s homes and the seizure of his electronic devices.

Diddy’s legal representative, Aaron Dyer, was swift to condemn the federal government’s actions. He criticized the use of “military-level force” during the execution of the search warrants and expressed his disapproval of the treatment meted out to Diddy’s children and staff during the incident.

“Yesterday, there was a gross overuse of military-level force as search warrants were executed at Mr. Combs’ residences,” Dyer said.

Dyer further stated that Diddy cooperated with the authorities and that neither he nor any of his family members had been arrested or had their ability to travel curtailed. He lambasted the media’s speculative coverage of the event and referred to the investigations as a “witch hunt based on meritless accusations.”

As of now, no criminal charges have been filed in the case. Diddy continues to vehemently deny all allegations of abuse and assault. However, the fact remains that his electronic devices have been seized, and they may hold key evidence that could either implicate or exonerate him.

The repercussions of this case are sure to reverberate through the music industry. Diddy, a prominent figure in the music world, commands a large following. His influence extends beyond his own music to the artists he’s nurtured and the successful business ventures he’s spearheaded. The outcomes of this investigation will undoubtedly affect his standing in the industry.

The case against Diddy is a stark reminder of the intersection of fame, power, and accountability. It brings to the fore the critical importance of legal scrutiny, no matter how high the accused may stand in societal rankings. As the legal proceedings unfold, all eyes will be on the outcome, which may set a precedent in the music industry and beyond.

At this point, the only certainty is the uncertainty of what the future holds for Diddy. However, the music mogul’s fans and critics alike await the conclusion of the investigation, hoping for a fair and just resolution.

The music world was left reeling when news broke about Keefe D’s request to be released from custody before his forthcoming trial. Prosecutors, however, are voicing strong opposition, labeling him a significant threat to society. In this article, we delve into the details of a case that continues to grip the attention of music news followers worldwide.

According to recent reports, Duane “Keefe D” Davis, the alleged orchestrator of 2Pac’s murder, requested to be released from custody on his own recognizance before his scheduled June trial. If this request fails, his legal team plans to advocate for a bail not exceeding $100,000.

According to court documents obtained by TMZ, prosecutors argue that Davis’ request is unjustified. They’ve listed several reasons to deny his release before the trial. Among these are his former status as a high-ranking South Side Compton Crip, his repeated admissions about his involvement in 2Pac’s murder, and allegations of threats made to witnesses while in custody.

Prosecutors highlight Keefe D’s past as a high-ranking South Side Compton Crip. They underline the danger he might pose to society, considering his connections and influence within this notorious gang.

According to the prosecutors, Keefe D’s repeated admissions concerning his role in 2Pac’s murder are another strong reason against his pre-trial release. The prosecutors continue to argue that there is substantial evidence pointing to Davis’ orchestration of the 1996 crime.

The prosecutors have also brought up allegations of threats made by Davis to witnesses while in custody. If proven true, these allegations could significantly impact Davis’ plea for bail.

Factoring in all these elements, the state of Nevada is asking the court to keep Davis in custody until the start of the trial. Prosecutors believe that the gravity of the charges and the potential risks involved merit such a decision.

Despite the case against him, Davis last month pleaded not guilty to involvement in 2Pac’s death. He had already made two earlier court appearances, at which his attorney failed to show up.

Earlier this month, 2Pac’s biological father, Billy Garland, shared his thoughts on Davis in an interview. According to Garland, Davis was merely a pawn in a larger game. He believes that Davis was exploited by various entities, including the government, the justice department, the LAPD, the Las Vegas Police Department, and others.

“He’s always been a tool, and there’s just time that they used him for what they wanted to use him,” he said. “Anytime a Black man gets strong that has the potential to lead other Black people, he’s not gonna survive.”

As the case continues to unfold, fans of 2Pac and followers of Music News will be watching closely to see how this dramatic saga ends. Will Keefe D be granted bail, or will the prosecutors’ arguments prevail? Only time will tell.

Image Credit: lev radin / Shutterstock.com

In a shocking revelation, five former officers of the British police force have publicly admitted to circulating racist messages about Prince Harry and Meghan Markle, the Duke and Duchess of Sussex. This unsettling news has once again brought to the forefront the issue of racism that has been a longstanding concern within the British establishment.

The five officers – Robert Lewis, Peter Booth, Anthony Elsom, Alan Hall, and Trevor Lewton – pleaded guilty at London’s Westminster Magistrates’ Court. Their charges included sending grossly offensive racist messages via a public communications network.

A sixth officer, Michael Chadwell, denied the same charge and is expected to return to court on November 6. The rest are scheduled for sentencing on the same day.

These officers were previously part of London’s Metropolitan Police Force, serving in the Parliamentary and Diplomatic Protection branch, responsible for safeguarding politicians and diplomats.

The officers were arrested and charged following a BBC investigation last year that uncovered their clandestine activities. These included the circulation of offensive and racist messages in a closed WhatsApp group. Their derogatory comments targeted not only the Duke and Duchess of Sussex but also other members of the royal family, such as Prince William, Kate, the late Queen Elizabeth II, and her late husband, Prince Philip. High-profile political figures like U.K. Prime Minister Rishi Sunak, former interior minister Priti Patel, and former Health Secretary Sajid Javid were also victims of their contemptuous remarks.

The messages were circulated between 2020 and 2022, a period during which none of the accused were serving as officers. According to Newsweek, their conversations also included discussions about the U.K. government’s plans to deport asylum seekers to Rwanda and the floods in Pakistan.

This incident is not an isolated one. In 2022, two active officers were discharged from service when their racist conversations on WhatsApp were revealed publicly. Shockingly, one of these messages was even circulated during the royal wedding of Prince Harry and Meghan Markle in 2018.

These racist incidents significantly contributed to the decision of the royal couple to relocate to America in 2020. They stepped away from the monarchy, citing the high tension between members of the royal family, the royal institution, and the British tabloid press.

In the 2021 documentary series, The Me You Can’t See, Prince Harry revealed to Oprah Winfrey that the media played a significant role in fostering racial hatred against his wife. He expressed regret over not taking a firm stand against racism earlier in his relationship with Meghan.
